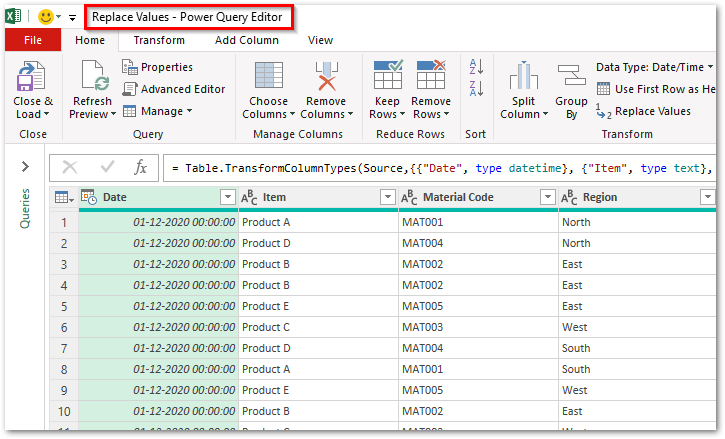

This is a repost of my answer to a similar question:

The ZIP file format includes a directory (index) at the end of the archive. This directory says where, within the archive, each file is located and thus allows for quick, random access without reading the entire archive.

This would appear to pose a problem when attempting to read a ZIP archive through a pipe, in that the index is not accessed until the very end, so individual members cannot be correctly extracted until after the file has been entirely read and is no longer available. As such, it appears unsurprising that most ZIP decompressors simply fail when the archive is supplied through a pipe.

The directory at the end of the archive is not the only location where file meta information is stored in the archive. In addition, individual entries also include this information in a local file header, for redundancy purposes.

Although not every ZIP decompressor will use local file headers when the index is unavailable, the tar and cpio front ends to libarchive (a.k.a. bsdtar and bsdcpio) can and will do so when reading through a pipe, meaning that the following is possible: `wget -qO- <URL> | bsdtar -xvf -`

With unzip itself, the proper syntax would be `$ unzip …`, but it fails with an error whose listed causes include an archive that is too big (2 GiB) without large file support.

This is because the standard zip tools mainly use the lseek function to set the file offset to the end of the archive and read its end of central directory record. That record is located at the end of the archive structure and is required to read the list of files (see: Zip file format structure). Therefore the file cannot be a FIFO, pipe, terminal device or any other dynamic input, because such an object cannot be positioned by the lseek function.

That leaves the following workarounds:

- use a different kind of compression,
- use alternative tools (as suggested in other answers),
- create an alias or function to use multiple commands.
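To make the role of the local file headers more concrete, here is a minimal Python sketch of extracting members from a forward-only stream without ever touching the directory at the end. It is an illustration under simplifying assumptions, not a description of how bsdtar is implemented: the helper names (`read_exact`, `stream_members`, `ForwardOnly`, `build_demo_archive`) are made up for this example, and the parser assumes each local header already carries the sizes (no data descriptor), no ZIP64, no encryption, and ASCII or UTF-8 file names.

```python
import io
import struct
import zipfile
import zlib

LOCAL_SIG = b"PK\x03\x04"  # local file header signature
EOCD_SIG = b"PK\x05\x06"   # end of central directory signature


def read_exact(stream, n):
    """Read up to n bytes from a forward-only stream (no seeking)."""
    buf = b""
    while len(buf) < n:
        chunk = stream.read(n - len(buf))
        if not chunk:
            break
        buf += chunk
    return buf


def stream_members(stream):
    """Yield (name, data) pairs using only the local file headers.

    Assumption: sizes are present in each header (flag bit 3 clear),
    and data is either stored (0) or deflated (8).
    """
    while True:
        header = read_exact(stream, 30)  # fixed part of a local header
        if len(header) < 30 or header[:4] != LOCAL_SIG:
            return  # reached the central directory or end of input
        (_sig, _ver, flags, method, _mtime, _mdate, _crc,
         comp_size, _size, name_len, extra_len) = struct.unpack(
            "<4s5H3L2H", header)
        if flags & 0x08:
            raise ValueError("sizes follow the data; not handled here")
        name = read_exact(stream, name_len).decode("utf-8")
        read_exact(stream, extra_len)  # skip the extra field
        payload = read_exact(stream, comp_size)
        if method == 8:
            payload = zlib.decompress(payload, -15)  # raw deflate stream
        elif method != 0:
            raise ValueError(f"unsupported compression method {method}")
        yield name, payload


class ForwardOnly:
    """Pipe-like wrapper: read() works, there is no seek()."""

    def __init__(self, data):
        self._raw = io.BytesIO(data)

    def read(self, n=-1):
        return self._raw.read(n)


def build_demo_archive():
    """Create a small in-memory archive to experiment with."""
    buf = io.BytesIO()
    with zipfile.ZipFile(buf, "w", zipfile.ZIP_DEFLATED) as zf:
        zf.writestr("a.txt", "hello\n")
        zf.writestr("dir/b.txt", "world\n" * 100)
    return buf.getvalue()


archive = build_demo_archive()
# The end of central directory record sits in the final bytes of the
# archive, which is why seek-based tools jump to the end first.
eocd_offset = archive.rfind(EOCD_SIG)
members = dict(stream_members(ForwardOnly(archive)))
for member_name, member_data in members.items():
    print(member_name, len(member_data))
```

Archives written to a non-seekable output often set flag bit 3 and store the sizes after the data, in which case this sketch gives up; a real streaming extractor has to handle that case as well.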
